
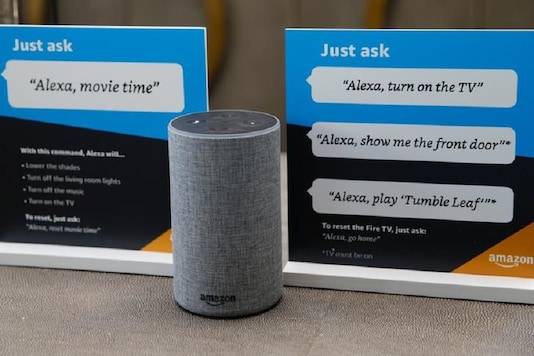

Image for illustration. (Photo: Reuters)

The researchers have concluded that this is possibly a deliberate engineering design to make for easy end-user response. However, it also appears to raise privacy concerns.

- News18.com

- Last Updated: July 2, 2020, 6:58 PM IST

- Edited by: Shouvik Das

With more users taking to voice-activated devices with assistants such as Amazon Alexa, Google Assistant, Apple’s Siri and Microsoft Cortana (the latter on a much smaller scale), a group of researchers from Ruhr-Universität Bochum and the Max Planck Institute for Cyber Security and Privacy in Germany has claimed to have identified over 1,000 phrases that appear to accidentally trigger a response from each of these assistants. The study ties directly into privacy concerns regarding such speakers, which have time and again been noted to be listening in on private or sensitive conversations after being accidentally called to action by a wrong call word. Now, the researchers behind this study believe that this may be a deliberate engineering design, done to keep smart home products more responsive rather than resistive.

To uncover this, the researchers placed these speakers in a room and played runs of popular TV shows such as Game of Thrones, House of Cards and Modern Family. While these shows were playing, the researchers took note of every time the light came on for any of these smart speakers, signifying that it had been called to action. They would then roll back and replay the sequences that appeared to trigger the speakers, and note the specific phrases that were activating them. The study may throw light on critical improvements that are desperately required in smart home products with embedded microphones, connectivity, AI and voice recognition capabilities.

For Amazon’s Alexa, the research found that the intelligent assistant was being activated by commonly used words such as ‘unacceptable’, ‘election’, ‘a letter’ and ‘tobacco’. Apple’s Siri appeared to respond to phrases such as ‘a city’ and ‘hey Jerry’. Google Assistant, which is present in every Android smartphone today, as well as Google’s host of smart home hardware, responded to phrases such as ‘OK cool’ and ‘OK, who is reading?’. Microsoft’s Cortana, meanwhile, would also respond to being called ‘Montana’.

While some of the accidental call words are outright funny but still understandable, it is a security concern that these keywords may trigger the speakers into recording unexpected bits of everyday conversations. This created considerable furore when it was uncovered that each of Amazon, Apple, Google and Microsoft employed third-party human contractors, alongside their AI models, to vet snippets of audio recordings collected from these speakers, ‘for quality monitoring purposes’. While all of the companies have since vouched that they are no longer employing humans or outsourcing this work, having smart speakers activate in situations where they are not deliberately called still makes for a serious security breach.
